Is Your Feeble PC Ready For VR?

A triple whammy of rendering rigour

This is not virtual. This is reality. The two big beasts of the coming VR revolution are lumbering into view. It's actually happening. By the end of April both the Oculus Rift and the HTC Vive VR headsets will be on sale. Things you can actually buy. Yes, yes, virtual reality has had several false starts. But this time, you can sense it. This time, it's different. Well, probably. Oh, OK, nobody knows how big an impact VR is going to have in the next few years. But what I can do is help you to understand how much PC power you're probably going to need to get the most out of the new headsets.

TL;DR

- Traditional frame-rate performance metrics don't get the job done for VR

- VR is all about graphics performance and specifically latency

- The recommended requirements for the Rift very likely apply to the Vive, too

- Treat those requirements as minimum as opposed to optimal

- Things aren't looking good for middling GPUs that you may currently have installed

- But you probably won't need a new CPU

- VR rendering isn't quite the same as conventional 3D rendering and technologies like 'time warping' could help VR run well on less powerful hardware

- AMD and Nvidia look closely matched when it comes to VR optimisations

That TL;DR is too long

- For now, roll with the Oculus Rift recommendations for both headsets

- So that's Nvidia GeForce GTX 970 or AMD Radeon R9 290. Don't worry too much about your CPU

Also, this is not a Rift versus Vive post. For that, you'll want to pop over here.

A very real nightmare

First, let's be clear. If you deep dive into virtual reality, really get to grips with all the performance-relevant issues, this is a nightmare subject.

Forget about the old days of average frame rates or maybe minimum frame rates if you wanted to get fancy. In the brave new world of VR, everything is eleventy-three times more complicated. Welcome to API-event-to-draw-call latency. To time warping and late latching. To technojargon that makes your brain bleed.

That said, the subject mainly comes down to raw rendering performance. And VR is nothing if not a triple whammy of rendering rigour.

Doing VR well means fooling your wetware. For the most part, our brains are actually pretty keen to be fooled. Or rather our brains are keen to set up a coherent, working model of the world around us. But you do have to jump through certain hoops to prevent what you might call brain barf or, to botch a Blackadderism, the vomitous extramuralisation of sensory inputs.

That motion-to-photon thing, illustrated...

For VR, specifically, you need high resolutions, you need high refresh rates, and you need low response times. That's the triple whammy. Bam, bam, bam.

Without getting bogged down in the science, at best any lag or choppiness is going to break the spell. At worst, it'll give you motion sickness. You can read all about the notion of 'motion-to-photon' and how latency is critical for VR in this Valve blog post.

But the immediate assumption is that you'll also need one hell of a graphics chip, right? In simple terms, yes. The additional CPU load generated specifically by VR isn't particularly onerous. So, it's mostly a graphics power problem. Do the rendering really quickly and the latencies tend to take care of themselves.

But VR is also more than simply pumping an existing 3D engine into a headset. And that has implications for how much PC power you'll need to pack.

Those new headset specs in full

Hold that thought. First, let's tear through the known speeds and feeds for the new headsets and what it all means for your poor old GPU. A bit like games consoles, VR involves the science and engineering of the possible. Inevitably, therefore, the two headsets look very similar in terms of the key performance-critical specifications. Which are thus:

- 1,080 by 1,200 pixels per eye or 2,160 by 1,200 total resolution

- 90Hz refresh

VR courtesy of Facebook? There's a gag in there somewhere about life, art and virtual reality. Make one up for yourself

Oculus was initially and inexplicably cagey about those numbers, but as far as I am aware they are now official. That said, unlike HTC, Oculus has been kind enough to provide recommended PC specifications (read: minimum), the highlights of which are:

- Nvidia GeForce GTX 970 / AMD Radeon R9 290

- Intel Core i5-4590

- 8GB RAM

Given the identispec resolution and refresh, there's not much initial reason to expect the minimum requirements for Vive to be terribly different.

For both headsets the resolution works out to roughly 2.6 million pixels. For reference, plain old 1080p is just over two million pixels. So that's 25 per cent more rendering load over 1080p in terms of the plain pixel count. For further reference, the popular 27-inch 2,560 by 1,440 display format translates to 3.7 million pixels.

If that was as far as things went – 25 per cent more pixels versus 1080p – you wouldn't need all that much GPU. Most current mid-range GPUs could handle conventional desktop gaming on an effective 2,160 by 1,200 pixel display.

Having more than one player in the VR game is going to be critical...

Nope, the problem is the additional refresh and response hurdles. Superficially, 90Hz refresh puts both headsets 50 per cent higher than a standard PC monitor. So now we're well on the way to twice the rendering load of common-or-garden 60Hz 1080p.
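For anyone who wants to check the sums so far, here is a minimal back-of-envelope sketch. It uses only the resolutions and refresh rates quoted above, and it assumes rendering load scales with raw pixels per second, which is a crude proxy rather than a real benchmark. The function name is made up for illustration.

```python
# Back-of-envelope pixel throughput, using only the figures quoted in the article.
# Assumption: load scales linearly with pixels drawn per second (a crude proxy).

def pixels_per_second(width: int, height: int, refresh_hz: int) -> int:
    """Pixels that must be drawn each second to keep up with the refresh rate."""
    return width * height * refresh_hz

monitor_1080p60 = pixels_per_second(1920, 1080, 60)
headset_90hz = pixels_per_second(2160, 1200, 90)

pixel_ratio = (2160 * 1200) / (1920 * 1080)   # per-frame pixel count ratio
load_ratio = headset_90hz / monitor_1080p60   # pixels-per-second ratio

print(f"Pixels per frame: {2160 * 1200:,} vs {1920 * 1080:,} ({pixel_ratio:.2f}x)")
print(f"Pixels per second: {headset_90hz:,} vs {monitor_1080p60:,} ({load_ratio:.3f}x)")
```

On those assumptions the headsets need 1.25 times the pixels per frame and 1.875 times the pixels per second of a 60Hz 1080p monitor, which is where "well on the way to twice" comes from.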

Except it's not as simple as 60Hz versus 90Hz. If you set a target of 60Hz or 60 frames per second on a regular PC monitor, the consequences of dipping below 60 aren't automatically disastrous. You often won't really notice the odd dip into, say, the 40s.

Not so for VR. This problem is intimately linked to response or latency. In other words, how long it takes the display system to react to your inputs, which in VR terms mostly means head movements. If the movement of the world you see perceptibly lags your head movements, the whole thing comes tumbling down. It's that motion-to-photon thing I mentioned earlier.

Can we have some numbers, please?

Extrapolating from the pixel counts and refresh rates is a start. But what we really need are metrics that include latency.

It's early days for measuring that aspect of VR rendering performance. If you want some wider reading, here are a couple of articles you might want to look at. First is the Tech Report's round-up of what you might call marginal VR-capable current graphics cards, from the Nvidia GeForce GTX 970 and down.

This is where VR starts according to Nvidia and Oculus

To cut a long story short, my hunch is that you want Tech Report's '99th percentile frame time' metric to read under 20ms and probably nearer 11ms for a nice VR experience (a 90Hz refresh leaves roughly 11ms per frame). TR's tests in that round-up are split across 1080p and 2,560 by 1,440 resolutions. But overall, it doesn't look terribly pretty for the GTX 970, let alone any of the weaker cards in terms of frame rendering latencies.
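To show why a percentile frame time tells you more than an average, here is a rough sketch. The frame times are invented for illustration, and the nearest-rank percentile used here is an assumption on my part, not necessarily how Tech Report calculates its figure.

```python
import math

REFRESH_HZ = 90
FRAME_BUDGET_MS = 1000 / REFRESH_HZ  # about 11.1ms per frame at 90Hz

def percentile_frame_time(frame_times_ms, pct=99):
    """Nearest-rank percentile: the frame time that pct% of frames meet or beat."""
    ordered = sorted(frame_times_ms)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]

# Made-up sample, not benchmark data: 97 quick frames and three slow ones.
sample_ms = [9.0] * 97 + [14.0, 18.0, 25.0]

p99 = percentile_frame_time(sample_ms)
average = sum(sample_ms) / len(sample_ms)

print(f"Budget at {REFRESH_HZ}Hz: {FRAME_BUDGET_MS:.1f}ms")
print(f"Average frame time: {average:.2f}ms, 99th percentile: {p99:.1f}ms")
print("Within budget" if p99 <= FRAME_BUDGET_MS else "Misses the 90Hz budget")
```

With this invented sample the average comes out at a healthy 9.3ms, yet the 99th percentile is 18ms. A handful of slow frames is all it takes to miss the 90Hz budget, and an average hides them.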

Put another way, it looks like even with a 970, you're going to need to compromise on the eye candy to get really comfortable VR-friendly frame rates. You can also refer to this Oculus blog post for their take on the rendering demands of VR. It claims the load is roughly 3x that of driving a conventional 1080p PC display.

A further interesting peep into the future of VR performance is this preview of Futuremark's upcoming VRMark benchmark. If you can't be bothered to read it yourself, and I don't blame you, what you would have learned is as follows. Firstly, AMD and Nvidia are closely matched for latency and indeed some aspects of latency aren't directly proportional to raw GPU power. Secondly, per-eye latency (ie left eye versus right eye) can differ pretty dramatically when using a single screen split between two eyes instead of two fully independent displays.

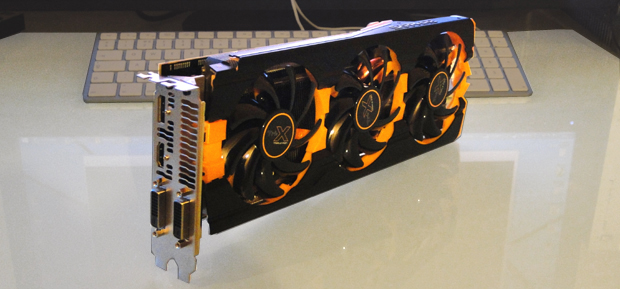

The Radeon R9 290 is the AMD lift-off point

Two independent displays are clearly desirable, but my understanding is that, for now, the new headsets use a single screen split via optics, just like the development kits. But I have failed to find absolutely definitive info on that. Like I said, this is a nightmare subject (shout out below if you know something I don't on this bit, or anything else of relevance for that matter).

So, I do need that ridiculously expensive GPU, then?

Probably, but there are two possible mitigating factors. The first is that I reckon frame rate and response are more important than image fidelity – eg things like texture quality – for VR. Crush the eye candy settings and more modest GPUs could well be workable. We shall see.

The other saving grace could involve VR-specific rendering technologies like 'time warping' and 'late-latching'. Say what? Time warping is essentially a low-overhead kludge for approximating some extra 'fill in' frames to improve performance. And late-latching is basically a clever way of optimising the rendering queue without introducing lag.

If you want to deep dive into the time warp, this YouTube vid explains how it works. For late latching, go here.
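For a feel of where those 'fill in' frames slot in, here is a toy scheduling model. It is a sketch under loud assumptions, not how Oculus or anyone else implements time warp: every render is assumed to start on a refresh boundary, and a "warped" refresh simply stands for the previous frame re-shown after being adjusted for the latest head position. The actual image warp isn't modelled, and the function name and render times are hypothetical.

```python
import math

FRAME_BUDGET_MS = 1000 / 90  # one 90Hz refresh interval, about 11.1ms

def schedule_refreshes(render_times_ms, budget_ms=FRAME_BUDGET_MS):
    """Label each display refresh as a freshly rendered or a warped frame.

    Toy model: each render starts on a refresh boundary. A render that spans
    N refresh intervals is shown as "fresh" once it finishes, and the N - 1
    refreshes it overran are filled with a "warped" copy of the previous frame.
    """
    refreshes = []
    for render_ms in render_times_ms:
        intervals = max(1, math.ceil(render_ms / budget_ms))
        refreshes.extend(["warped"] * (intervals - 1))
        refreshes.append("fresh")
    return refreshes

# Made-up render times in milliseconds: the third frame blows the budget.
timeline = schedule_refreshes([9.0, 10.5, 16.0, 9.5])
print(timeline)
```

In this model the 16ms frame costs one warped refresh rather than a visible stutter, which is the sense in which time warp could help less powerful hardware keep up.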

And what of AMD versus Nvidia?

The final question is ye olde AMD versus Nvidia debate. Inevitably, both companies are bigging up the VR prowess of their graphics products. Nvidia has its GeForce GTX VR Ready thing, which tells you which card to buy (GTX 970 and up, no surprise), its VR Direct brand for VR-friendly technologies and then the GameWorks VR programme to help game developers with the VR challenge.

Will the VR rendering load turn out to be Titanic?

As for AMD, well, props for condensing all of its VR efforts under the singular LiquidVR umbrella. But as you can see from the VRMark preview story linked above, the early data don't seem to favour either vendor. For more discussion on AMD versus Nvidia as regards VR and some additional links, this Reddit thread is as good a place to start as any.

Just tell me what to buy

Like I said, this is a nightmare subject. The minimum specs quoted by Oculus are, ultimately, as good as it currently gets. So, I could have saved myself a lot of bother and you a lot of reading by just linking them. Which I did. At the top. So it's not my fault if you still ploughed all the way through. But at least you now understand a little better why those minimum specs are precisely as they are.