Statespace: the neuroscientists who want to improve your gaming skills

The science of skillz

With the proliferation of esports, and the potentially huge sums of money involved if you make it to the top tier in some games, it's not surprising that there are so many third parties carving out a niche by offering to help improve your performance. One which caught my eye recently was Statespace [official site], which is being set up by Wayne Mackey and Jay Fuller. The pair have left their academic research positions at New York University to focus on this neuroscience-based tool for developing the skills associated with competitive gaming.

The software and the first product, Aim Lab, are only just launching into beta, and this is hardly the first email I've had about a project aiming to help aspiring pro players improve their gaming, BUT I had so many questions when faced with Fuller's suggestion - "you could think of it like the NFL Combine or perhaps FitBit for gaming" - that I couldn't resist finding out a bit more.

First, here's the proposal as laid out in the Statespace summary:

"Our mission is to improve training conditions in the eSports space by introducing objective measures of skill based on decades of validated science. As avid gamers ourselves, we’ve seen players at various levels (professionals and hobbyists alike) look for ways to improve their skills, much the same way athletes from traditional sports train to gain a competitive edge.

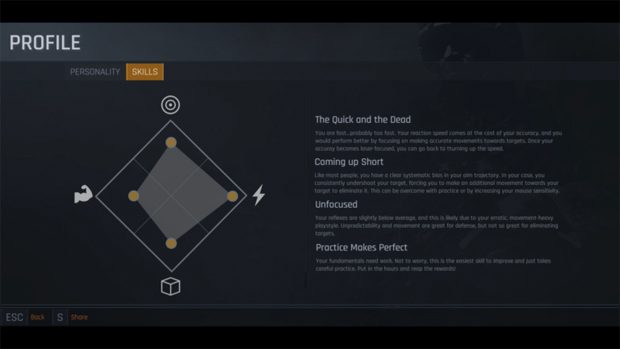

"Our first product, Aim Lab, assesses a gamer’s neurocognitive skills (e.g, visual acuity, decision-making, reaction time, hand-eye coordination). The platform looks and feels like a classic first person shooter game, but under the hood it runs experiments to assess the player’s skills. Our A.I. identifies player weaknesses and spawns custom practice scenarios to train the player more efficiently. In this way, we can help players get better, faster by serving as a personal trainer."

The idea is that, in being able to break down game performance and see data assessing specific skills, players would be able to see the areas where they need to improve and thus reap the benefits of those improvements in competitive games.

Obviously there's a lot to unpack there - how the breakdown of skills was decided, whether it's even possible to measure the impact of the assessments/training on the game a person wants to improve at, whether this is purely for technical skill assessment or if the team is hoping to address emotional or psychological elements of play...

With that in mind, here's our emailed Q&A:

Pip: How did you decide on the skills to focus on (there are so many different elements and skills which contribute to success in esports, plus the combinations needed vary across the games being played and the styles within those)?

Jay Fuller: We are focusing on the fundamental skills that bridge most if not all games. Not all games will require all of these skills, but they will include at least one of them. The fact that there are vast differences between games and between character archetypes within the same game fascinates us and is another reason our unique data will be insightful. To elaborate, in traditional sports, the ability to run fast would correlate well to success in many sports (e.g., football, basketball, soccer, etc). Conversely, upper body strength may be more relevant to football success than soccer. Even within football, arm strength may be of greater importance for certain positions (e.g., quarterback, lineman) than others (cornerback). Nevertheless, the ability to test players of all skills on the same metrics (as they do with the NFL Combine) is helpful for players and coaches alike to refine training.

Now onto gaming: in first-person shooter games, reaction time and fine motor control will be relevant to most titles, but excellent visual acuity may be especially relevant to a game like PUBG where you are trying to detect subtle motions in a large open environment. Finally, it is important to note that the skills I listed are examples and not set in stone. Which skills are best to ultimately include in the platform is an empirical question, and one that will be guided by combining our R&D expertise with feedback from beta testers.

Pip: Are there specific games where you think your approach is better placed to improve performance than others?

Fuller: We are currently focused on FPS titles because they require the most mechanical skill, but are moving into MOBA genres next, which, as you mention in [the previous question], do require a different skill set and context, but still draw on skills we measure in neuroscience (e.g., adaptability, decision-making). Ultimately, comparing skills between genres of games will be a fascinating area to explore within our combined data set.

Pip: Given the specificity of some of the skills needed to excel in a game (knowing particular combos or timings or matchups or any number of other things), how would you guard against someone using your program to improve but only getting better at playing your specific training scenario?

Fuller: Consider training in stick-and-ball sports. In football, players often lift weights to train, they don't just play football all day long. While it's true that this means players may end up getting better at exercises like the bench-press (which has its own skill to it), they still end up getting objectively stronger because they are targeting that attribute, and that strength leads to better performance on the field.

In eSports, strength may not matter as much, but attributes like perception, decision-making and hand-eye coordination certainly do: light and sound come into the brain, this information is processed, and a flurry of buttons are pressed in a fast and coordinated sequence. If there is a weakness anywhere in that loop, then performance will be affected. Esports players need methods to identify their weaknesses and train them. As neuroscientists, we will provide measurement techniques (experiments) that assess skill, and then we will process that data to provide optimal feedback in order to maximize learning. Think of us as what physiologists are to traditional sports: sports scientists.

We are creating a specialized environment in which we can measure and train skills that are not only game agnostic, but agnostic across real life tasks. For example, as humans, we have unique visual biases in which we may see better to the left than the right. This exists whether you are driving a car, playing baseball, or playing Overwatch.

Pip: You mention the NFL Combine but that is a specific, official NFL event with direct consequences for drafting and salary and so on. Given Statespace and Aim Lab would be third-party software, how are you approaching the esports space? Is it a case of approaching amateurs who want to become pros first and trying to grow from there, or are you in talks with organisations/specific games, or are you trying something else?

Fuller: We're working to help players of all levels improve their technical skills. Our first offering, Aim Lab, will be rigorous enough to be used by pro, semi-pro, and college teams, but approachable enough to be used by amateurs. Of course, there are many more amateurs, so our primary outreach efforts have focused there. We are also in conversations with several teams and colleges to help address their unique data needs.

As for drafting, salary negotiation, recruitment: while we're initially focused on training, we hope our data will be additive to the existing recruitment process for teams. Data on player skill can help players and teams of all levels make better decisions. Numbers won't tell the whole story on the potential of a particular player, but they will be another factor for decision makers in the eSports industry to use.

Pip: The other thing is that you've spoken about assessing skills and working on ways players might develop them with the intention of improving in competitive games as a result of those changes, but what is there to measure that impact?

Fuller: We will be correlating our data with the data made available to us from games. That metric won't tell the whole story as there are a lot of factors that go into game performance beyond technical skill (strategy, teamwork, emotional influences), but it will be one way in which to evaluate the impact of Aim Lab. Qualitative data will also be used. Feedback from players and coaches will be critical to our research and development team to make the best product possible. We're really looking forward to feedback from the gaming community.

Pip: I'm asking that previous question because I want to know whether there is any specific research you've done (or which you can link me to) which demonstrates that those improvements in the skills you've isolated are transferring to games?

Fuller: We have studied many aspects of neuroscience: motor control of reaching and pointing movements, eye movements, memory, working memory, perception, decision-making, etc. Name an area of cognitive neuroscience, and someone on our team has studied it or is well-read on the topic. The cognitive neuroscience experiments we run in the lab are in fact just custom video games built to test specific aspects of the brain. During these experiments, when you give participants richer feedback on their performance, they learn quicker. If that sounds unsurprising, it should be. The hard part is getting good data to present back to the participant. In order to do so, you have to set up the experiment in a way that measures the attribute of interest as accurately as possible. As neuroscientists, that's our area of expertise. With Aim Lab, we're bringing that expertise directly to gamers.

Pip: Relatedly, I'm interested to know whether/how you'll be tracking the effects of using Aim Lab on playing those competitive games. (I ask because relying on players' self-reporting will be so vulnerable to things like confirmation bias and because some changes are potentially hard to quantify).

Fuller: Yes, we'll be correlating data from Aim Lab to data available from common competitive games. You're correct in that some changes will be difficult to identify because success in gaming is a combination of so many factors, some of which are measurable, and some which are not. However, accurate and rich data will help players, coaches and the media to evaluate player performance with more objectivity.

Perhaps looking to an example from traditional sports would be helpful for context here. In baseball, the introduction of radar guns in the '60s allowed for the measurement of pitch speed. Being able to objectively tell the difference between a 95 mph pitch and 85 mph pitch allows players to train/track their ability and coaches and media to objectively measure talent. Now, just because a player can throw 95 mph doesn't mean he'll have a better career than the guy who throws 85 mph. That said, pitching speed is known to contribute to success, so people in the industry like to have that number to factor into their decision making. Similarly, with Aim Lab, we want to empower players, coaches, and media with more objective information.

Pip: Finally, are there any elements in your software which focus on emotional self-regulation or related areas? I appreciate you seem to be targeting technical skill and performance but esports success is frequently also about functioning as part of a team and not, for example, tilting when things go awry.

Fuller: We are focusing on technical skills for now as those skills are more feasible to objectively measure as a first step. We want Aim Lab to be another training tool in a player's arsenal. It won't replace playing the actual game, it won't replace the coaches who help with strategy and management, it won't replace psychologists who help with the emotional aspects like tilting - Aim Lab metrics and training will be additive to all of those existing player support systems. It's the ultimate tool for exercising and evaluating game-relevant technical skills.

Pip: Thank you for your time.

The venture has a $500,000 pre-seed round of financing from a start-up studio called Expa (Expa being a project founded by Garrett Camp, co-founder of Uber and StumbleUpon and invested in by a number of other business/tech figures like Virgin's Richard Branson, Foursquare's Naveen Selvadurai and so on) and some angel investors, so it sounds like Silicon Valley is keeping an eye on the idea.

I've also been digging around on the Statespace website since it went live and there's an interesting passage in the article on Plunkbat (Plunkbat being The Artist Usually Known As PlayerUnknown's Battlegrounds) and peripheral vision:

“We found that your peripheral vision is important for taking in the gist of a scene and that you can remove the central portion of an image, where your visual acuity is best, and still do just fine at identifying the scene,” said Adam Larson, a K-State master’s student in psychology. If we take a closer look and try to gain a better understanding of our vision, we can then translate that into how it affects our games and how to use it to our advantage.

We essentially have two important types of vision: peripheral and central. Central is exactly what you think it is: what our eyes are centering on and the main focus of what we are looking at. In a game like this, we scan around the map and buildings at all times to look for any indication of someone being around or right in front of us. Peripheral is the part of our vision that occurs outside the very center of what we are looking at. Peripheral vision is often used to get the gist of a situation or area. For instance, we will know that we are in the military base just by being in it: our central vision can be scanning around for enemies and items while our peripheral vision instantly lets us know where we are.

Now for the interesting part: our peripheral vision is actually better at detecting motion than our central vision. This is super useful in a game like PUBG, since any sort of movement is a huge indicator of death being seconds away.

Statespace and Aim Lab are intended for a Q2 2018 release via Steam (so late spring/early summer for the non-businessy Northern Hemisphere-ers among us), but the beta started on 13 September 2017. There's a signup sheet here if you want to give it a whirl.