Update: AMD's new graphics and CPU awesomeness

To be this good takes Vega

It’s all kicking off at AMD, peeps. The new Vega graphics chip is now more than merely a press release and has finally been released into the wild. Meanwhile, the insane ThreadRipper CPU with 16 cores and 32 threads has also landed. It’s all a far cry from just a few months ago when AMD was soldiering on with an elderly graphics product and a deadbeat CPU line-up. Time to catch up with AMD’s latest hardware awesomeness.

To quickly recap, AMD has wheeled out its latest graphics tech in high-end form. That means a trio of pretty pricey cards aimed at the enthusiast end of the market. We’re talking $400 / £400 minimum, or thereabouts.

You can bone up on the speeds and feeds here. But the basics involve three cards based on the same underlying graphics chip, albeit with varying specs, namely the new Radeon RX Vega 56, the Radeon RX Vega 64 and the Radeon RX Vega 64 Liquid.

AMD is styling this new graphics tech as the fifth generation of its long-established GCN architecture, but this time it’s purportedly all change. AMD says the main compute units that contain the brains of the graphics processing hardware have been redesigned from the ground up. AMD has also apparently pinched some features from its new Ryzen CPUs including high speed SRAMs and the clever Infinity Fabric interconnect.

That’s all great, but whatever AMD has done it isn’t immediately translating into a big uptick in actual game performance from an architectural point of view. Not across the board, at least. Often it performs pretty much as you’d expect of any old GCN graphics chip from AMD given the headline shader count and clockspeed specs of the new boards. But hold that thought.

My understanding is that much of the increased transistor count in the new Vega GPU versus the old Fiji chip found in the Radeon R9 Fury boards has been spent on enabling higher clockspeeds through deeper pipelines and other related features. In other words, not on adding computational hardware.

However, if Vega does have an on-paper strength, it’s the potential for performance in the most advanced game engines. The specific special sauce here is the so-called Rapid Packed Math feature, which essentially enables a high-speed floating point computation mode that comes at the cost of accuracy.

As it happens, the last word in precision isn’t necessary for graphics rendering much of the time. The upshot of which is that this high speed mode should be a boon for handling advanced lighting calculations, HDR rendering and all that jazz. As yet, it’s a somewhat theoretical feature as no games currently support the Rapid Packed Math stuff, but titles are reportedly incoming.
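To put a rough number on that speed-for-accuracy trade, here is a minimal Python sketch. It assumes the reduced-precision mode means 16-bit half-precision floats packed two to a 32-bit slot, which the text above doesn't spell out. The helper names are made up for illustration and nothing here touches a real GPU API. It simply rounds a value to 16 and 32 bits and compares the error.

```python
import struct


def to_half(x: float) -> float:
    """Round x to the nearest IEEE 754 half-precision (16-bit) value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def to_single(x: float) -> float:
    """Round x to the nearest IEEE 754 single-precision (32-bit) value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]


def pack_pair(a: float, b: float) -> bytes:
    """Pack two half-precision values into 4 bytes, the size of one single."""
    return struct.pack("<2e", a, b)


value = 0.1  # arbitrary example, e.g. a lighting coefficient
half_error = abs(to_half(value) - value)
single_error = abs(to_single(value) - value)

print(f"half precision error:   {half_error:.3e}")
print(f"single precision error: {single_error:.3e}")
print(f"two halves occupy {len(pack_pair(0.25, 0.75))} bytes")
```

The half-precision result is visibly less accurate, but two of them fit in the space of one regular float, and that is where the on-paper doubling of throughput would come from.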

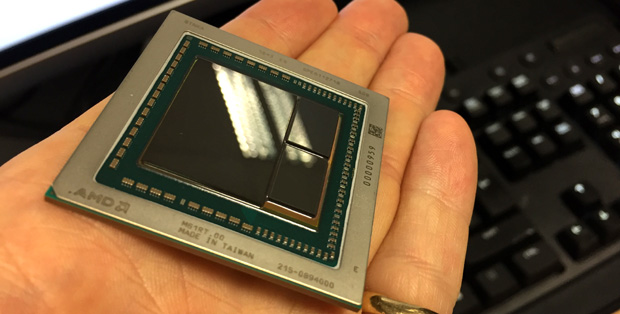

Could it be true that here in my mortal hand I do hold a nugget of purest graphics?

In the meantime, the yardsticks that matter are games you can actually play right now and when you boil down all the benchmarks, the overarching narrative to Vega’s performance goes something like this. If we’re talking about the air-cooled Vega 64 board, it generally falls somewhere in between an Nvidia GeForce GTX 1070 and a 1080. In older games, it’s closer to the 1070. In newer games, make that the 1080. There are of course exceptions, with Doom being an obvious example where the Vega 64 beats the 1080 fairly comfortably.

But as a broad picture, the "1070-ish in older games, 1080-ish in newer games" thang is a reasonable rule of thumb for where Vega 64 (air) currently sits. What that doesn’t capture, however, is power draw. Normally, it’s not something I care a great deal about. But this new GPU is extraordinarily power hungry, sucking up as much as 150W or more above a GTX 1080 under full load.

If that matters, it’s because it indicates AMD is likely running the chip right at the ragged edge in terms of clockspeeds and voltages, which isn’t terribly desirable. That said, the cost of feeding the board all those watts may put off the cryptocurrency mining crew. And that should at least help keep prices in check.
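For a sense of what that extra 150W could mean to someone running a card flat out, here is a back-of-the-envelope sketch. The electricity price and the round-the-clock usage are pure assumptions for illustration, not figures from any benchmark, and the function names are hypothetical.

```python
def extra_energy_kwh(extra_watts: float, hours_per_day: float, days: int) -> float:
    """Additional energy used over the period, in kilowatt-hours."""
    return extra_watts * hours_per_day * days / 1000


def extra_cost(energy_kwh: float, price_per_kwh: float) -> float:
    """Cost of that additional energy at a flat price per kWh."""
    return energy_kwh * price_per_kwh


EXTRA_WATTS = 150      # the gap to a GTX 1080 under full load, per the text
HOURS_PER_DAY = 24     # assumption: a mining rig that never sleeps
DAYS = 365
PRICE_PER_KWH = 0.15   # assumption: illustrative flat tariff, any currency

energy = extra_energy_kwh(EXTRA_WATTS, HOURS_PER_DAY, DAYS)
cost = extra_cost(energy, PRICE_PER_KWH)

print(f"extra energy per year: {energy:.0f} kWh")
print(f"extra cost per year:   {cost:.2f}")
```

Swap in your own tariff and hours. For a gamer putting in a couple of hours a night the figure shrinks to a small fraction of that, which is why the power draw mostly matters as a sign of how hard the chip is being pushed.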

Speaking of the sordid matter of money, pricing of the first high performance Vega boards is high enough to make them irrelevant to most of us even if they stick close to the recommended retail stickers of $499 and $399 for the Vega 64 and 56 respectively (UK prices in £ won’t be much if at all lower, I suspect). So, in many regards, what really matters is the indication these first boards give about what we can expect from more mainstream Vega-based graphics boards in the coming months.

That doesn’t look hugely promising from where I’m slouching. The chip used in these first Vega cards is absolutely massive and it struggles to compete with a fairly old and much smaller second-rung Nvidia GPU in the form of the GP104 item found in the GTX 1070 and 1080 boards.

It’s hard to see how a much smaller GPU based on this Vega architecture is going to radically shake up the space immediately below the 1070. The possible exception to that is if AMD manages to pull off something really special vis-à-vis clockspeeds. But that’s pretty speculative.

All in all, then, I’m not saying Vega is a disaster. But it does feel like a GPU design that is waiting for games to catch up and I’m not convinced that’s a terribly good thing. Nvidia is brilliant at making GPUs that work great in the here and now and the problem for AMD is that a GPU isn’t for ever. It’s barely for next year.

So performance today is ultimately more important than potential for tomorrow. Whatever, one thing I am sure about is that Vega isn’t as good as AMD would have liked. Not by a fair old whack.

Happily, that isn’t something you can say about its new Ryzen CPUs. For the most part, Ryzen must at least match and probably exceed AMD’s hopes for a new CPU architecture.

Fly my pretties! 32 threads. Count 'em!

It’s a pity for us that the one area where Ryzen isn’t a complete smash hit is games. But it’s good enough most of the time in current games and I reckon it will get significantly better over time as developers get to grips with its strengths and weaknesses. It’s a CPU that’ll be around for a long time in some form or other, so I’m confident doing that work will be seen as worthwhile.

As for the new 16-core ThreadRipper chip, well, it’s really a technological curiosity rather than a realistic proposition for gamers. But I couldn’t help taking it for a quick spin anyway. It’s pretty much Ryzen redux in most games. But a brief zap in Total War reveals a level of chronic stutteriness that’s even worse than the more mainstream Ryzen chips.

It’s a very expensive chip, so that arguably makes its gaming performance somewhat academic. But the stuttering was certainly bad enough that it would put me off bagging a ThreadRipper for now, if I had the inclination to spend that kind of money on a CPU. Which I don’t.

Overall, it’s interesting times, far more so than a mere 12 months ago. I do rather wish Vega was a stronger competitor. But it’s probably just good enough to keep Nvidia on its toes rather than sand-bagging, Intel style. Moreover, if Ryzen sells as well as it deserves to, AMD should have some money to plough back into the graphics business and give Vega the polish it probably needs.