What Sony's PS5 specs tell us about AMD's Big Navi cards

16GB of GDDR6, here we come

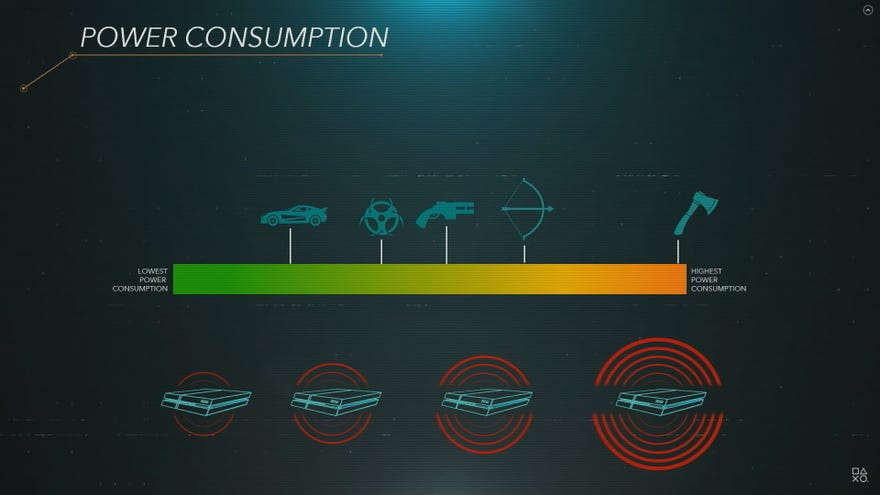

As big hardware spec reveals go, yesterday's PS5 specs deep-dive was pretty darn dry. Sony's Mark Cerny spent at least 50% of the 50-odd minute presentation talking about the console's SSD, and we still don't have any idea what the thing actually looks like. It was just abstract graph after abstract graph, a bit like the one up the top there, which I think is meant to portray how certain games affect the PS4's fan noise and power consumption. "Yep, axes are definitely more power intensive than cars, that sounds about right, yep, for sure," she says nodding sagely.

Nonsensical graphs aside, though, Cerny did thankfully spend a bit of time talking about the PS5's GPU, which, like the GPU inside the Xbox Series X, is based on a custom version of one of the upcoming AMD Navi GPUs. Admittedly, the Xbox Series X reveal earlier in the week had slightly juicier information about what we can expect from AMD's "Big Navi" graphics cards when they come to PC, but there were still a couple of interesting titbits in Sony's PS5 presentation that are worth taking a closer look at. For starters, it looks like 16GB VRAM cards will be very much a feature of AMD's 4K line-up. Here's what you need to know.

Our friends at Digital Foundry have even more info on the PS5 specs and Xbox Series X specs if you feel so inclined, but I've summarised the headline specs that concern us PC folk in the table below.

| GPU Specs | Xbox Series X | PS5 |
|---|---|---|
| Compute Units | 52 | 36 |
| Clock speed | 1825MHz (fixed) | 2230MHz (variable) |
| Memory | 16GB GDDR6 | 16GB GDDR6 |
| Memory Bandwidth | 10GB at 560GB/s, 6GB at 336GB/s | 448GB/s |

As you can see, both GPUs will have 16GB of GDDR6 memory at their disposal, which is quite the step up from the 8GB currently installed on AMD's RX 5700 and RX 5700 XT desktop GPUs. Heck, even Nvidia's RTX 2080 and RTX 2080 Super cards only have 8GB of GDDR6 memory to their name, while the RTX 2080 Ti has 11GB of the stuff.

Indeed, the only consumer PC graphics card to have ever had 16GB of memory before is AMD's Radeon VII, although that had 16GB of HBM2 (2nd Gen High Bandwidth Memory) rather than GDDR6. Of course, just because a graphics card has oodles of memory doesn't mean it's necessarily more powerful than those that don't. The Radeon VII, for example, wasn't really that much more powerful than Nvidia's RTX 2080 when I reviewed it last year, and its 4K performance has since been overtaken by the RTX 2080 Super as well.

However, the fact that both of the next-gen consoles will have 16GB of memory implies that AMD will be holding fast to this particular number in their Big Navi line-up, and I wouldn't be surprised if we saw multiple 4K desktop GPUs with the same amount of memory. After all, the fact that the two consoles' GPUs have different numbers of compute units (or CUs) suggests that these aren't just two slightly different versions of the same thing, but two completely different graphics parts.

The PS5 GPU is particularly intriguing, though, as it actually has a lower number of compute units than AMD's RX 5700 XT. This 1440p-oriented graphics card has 40 compute units, a clock speed of 1650MHz and 8GB of GDDR6 memory with a bandwidth of 448GB/s - the same bandwidth as the PS5's GPU. However, the clock speed on the PS5 GPU is really quite nippy. It's way faster than the one inside the Xbox Series X, and it's considerably quicker than the RX 5700 XT as well. This, according to Cerny, can actually result in higher performance than a GPU with more compute units but a lower clock speed.

In the presentation, for example, he gave the theoretical example of a GPU with 36 CUs clocked at 1000MHz and a GPU with 48 CUs clocked at 750MHz. Both, he said, would result in 4.6 Teraflops of power due to the way Teraflops are calculated, which is similar to what the PS4 Pro is capable of achieving. However, he said the 36 CU / 1000MHz GPU would be the one that gave better performance, because other GPU components tend to run faster when the GPU frequency or clock speed is higher - provided you can handle the resulting power and heat issues associated with it, that is.
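For the curious, here's a rough sketch of that Teraflops sum in Python. It assumes the commonly cited figures of 64 shader cores per compute unit and two floating point operations per core per clock, neither of which Cerny spelled out in the presentation, so treat the results as theoretical peaks rather than anything official. The function and variable names are purely illustrative.

```python
# Assumptions (not stated in the presentation): each AMD compute unit holds
# 64 shader cores, and each core does 2 floating point operations per clock.
SHADERS_PER_CU = 64
FLOPS_PER_SHADER_PER_CLOCK = 2


def teraflops(compute_units: int, clock_mhz: float) -> float:
    """Theoretical peak single-precision throughput in Teraflops."""
    flops_per_second = (
        compute_units
        * SHADERS_PER_CU
        * FLOPS_PER_SHADER_PER_CLOCK
        * clock_mhz
        * 1e6  # MHz -> cycles per second
    )
    return flops_per_second / 1e12


# Compute unit counts and clock speeds as quoted in this article.
gpus = {
    "Cerny example, 36 CUs at 1000MHz": (36, 1000),
    "Cerny example, 48 CUs at 750MHz": (48, 750),
    "PS5 (at maximum clock)": (36, 2230),
    "Xbox Series X": (52, 1825),
}

for name, (cus, mhz) in gpus.items():
    print(f"{name}: {teraflops(cus, mhz):.2f} Teraflops")
```

Both of Cerny's hypothetical GPUs come out at the same figure, which is rather the point: the Teraflops number alone can't tell you which of the two would actually be faster in games.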

Cerny admits that the PlayStation hasn't always excelled in this particular area - hence that weird weapon graph I used right at the top of this article - but it looks like the PS5 has been specifically designed to address these particular problems. Instead of running at a constant, fixed clock speed (like the Xbox Series X) and letting that dictate how much power the console draws, Cerny says the PS5 will do the opposite, running at pretty much full power with a variable clock speed. This should deliver a more consistent level of performance across the board, according to Cerny, which is important when each console needs to behave exactly the same as its next-door neighbour.

Cerny didn't go into detail about the PS5's cooling solution, admittedly - they're saving that for a later date - but these details do paint an interesting picture of how AMD's Big Navi desktop equivalents might function. After all, he did say early on in the GPU section of his presentation that, "If you see a similar discrete GPU available as a PC card at roughly the same time as we release our console, that means our collaboration with AMD succeeded in producing technology useful in both worlds." He did then go on to clarify that, "It doesn't mean that we at Sony simply put the PC part into our console," but it does still suggest that there will be a lot of similarities between them under the hood.

So what can we draw from this? It's possible, for instance, that we'll see some AMD Big Navi GPUs with a lower number of compute units but super high clock speeds (probably with some fairly hefty cooling solutions), or maybe they'll follow the general AMD tradition of being massive power hogs, but with the new, added improvement of running whisper-quiet. Or both! As always, it's silly speculating too much until AMD announce something concrete themselves, but given what we already know about AMD's Big Navi GPUs and their goal of creating "uncompromising 4K gaming" performance, this may well be a very early glimpse of how they're hoping to achieve that compared to what Nvidia currently offer. Either way, the race for the ultimate bestest best graphics card looks like it could become properly fierce by the end of the year, and I will be waiting on tenterhooks to see how it all plays out.