Nvidia RTX: Everything you need to know about ray tracing, DLSS and Nvidia's next-gen graphics cards

There's a lot more to Nvidia's RTX graphics cards than just ray tracing

Nvidia's next generation of RTX graphics cards is finally here, and several of them have already made their way into my bestest best graphics card list thanks to their fast performance and powerful new features compared to their outgoing GTX counterparts.

But what makes an Nvidia RTX card better than a regular old GTX one, and how do they stack up compared to everything else? And what's all this nonsense about Turing, ray tracing and DLSS? In this guide, I reveal all. By the time we're done here, you'll know everything there is to know about Nvidia's RTX cards, what ray tracing and DLSS actually mean, and (hopefully) which card you should pick for your next upgrade. So let's crack on, shall we?

Nvidia RTX: What is it and what does it do?

Nvidia's RTX cards are the company's newest generation of graphics cards that will gradually succeed and replace their current crop of GTX graphics cards - specifically the GTX 10-series such as the GTX 1060 and the GTX 1080.

As for why these cards are called RTX as opposed to regular old GTX, that's down to their rather unique feature set. When Nvidia launched their first pair of RTX cards back in August 2018, there was a lot of hoo-hah about how their new Turing GPU architecture had finally brought real-time ray tracing to mainstream graphics cards. Powered by the new RT Cores (or ray tracing cores) inside their Turing GPUs, ray tracing is - you guessed it - what gives Nvidia's RTX cards their name.

But what does ray tracing actually mean? Essentially, it's a really fancy (and GPU-intensive) type of lighting technology that makes in-game light, shadows and reflections behave in much the same way as we experience them in real life. It's not something particularly new - Pixar films have been using this technique for years, for example - but until now it's been practically impossible to achieve in games due to the sheer amount of time and computational effort it requires to render when you're constantly zipping the camera all over the place. I'll be going into more detail about what that actually looks like further down the page, but suffice it to say, it's pretty impressive stuff.

Ray tracing isn't the only thing that makes Nvidia's RTX cards special, though. There's also DLSS, Nvidia's nifty anti-aliasing / edge-smoothing tech that uses Turing's new AI-driven Tensor Cores and deep-learning know-how to help boost performance at higher resolutions. RTX cards are also capable of something known as variable rate shading, which lightens the load on the GPU even further by spending less shading effort on chunks of the environment that don't require as much rendering detail, helping to push frame rates higher without a massive loss in image quality.

The bad news is that buying one of these new RTX cards isn't suddenly going to make your games look incredible or run at lightning-fast speeds. Instead, a lot of Turing's key feature set requires specific support from individual developers - and in the case of DLSS, a lot of neural network training from Nvidia themselves - and right now there's only a fairly small list of confirmed ray tracing and DLSS games.

That's not to say Nvidia's RTX cards aren't powerful in their own right, of course, as most of them still offer a decent step-up from Nvidia's last generation of GTX cards. Speaking of which...

Nvidia RTX specs and price

Below you'll find a table comparing all the specs of each Nvidia RTX card that's been released so far, as well as links to all our reviews for a more detailed look at their individual performance figures. Just click the pink links and you'll be whisked away to their review page.

| Graphics card | CUDA Cores | GDDR6 RAM | Memory Speed | Memory Bandwidth | Power | Price |
|---|---|---|---|---|---|---|
| Nvidia GeForce RTX 2060 | 1920 | 6GB | 14 Gbps | 336GB/s | 160W | £329 / $349 |
| Nvidia GeForce RTX 2060 Super | 2176 | 8GB | 14 Gbps | 448GB/s | 175W | £379 / $399 |
| Nvidia GeForce RTX 2070 | 2304 | 8GB | 14 Gbps | 448GB/s | 175W | £549 / $599 |
| Nvidia GeForce RTX 2070 Super | 2560 | 8GB | 14 Gbps | 448GB/s | 215W | £475 / $499 |
| Nvidia GeForce RTX 2080 | 2944 | 8GB | 14 Gbps | 448GB/s | 215W | £749 / $799 |
| Nvidia GeForce RTX 2080 Super | 3072 | 8GB | 15.5 Gbps | 496GB/s | 250W | £669 / $699 |
| Nvidia GeForce RTX 2080Ti | 4352 | 11GB | 14 Gbps | 616GB/s | 250W | £1099 / $1199 |
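If you're wondering where the memory bandwidth column comes from, it's just the memory speed multiplied by the width of the card's memory bus, divided by eight to turn bits into bytes. Here's a quick Python sketch of that sum. Note that the bus widths aren't listed in the table above - I've taken them from Nvidia's published specifications - and the function and dictionary names are purely illustrative.

```python
# Memory bandwidth (GB/s) = memory speed per pin (Gbps) x bus width (bits) / 8.
# Assumption: bus widths are not in the table above; they come from Nvidia's
# published specs for each card.
CARDS = {
    "RTX 2060": (14.0, 192),
    "RTX 2060 Super": (14.0, 256),
    "RTX 2070": (14.0, 256),
    "RTX 2070 Super": (14.0, 256),
    "RTX 2080": (14.0, 256),
    "RTX 2080 Super": (15.5, 256),
    "RTX 2080Ti": (14.0, 352),
}


def memory_bandwidth_gbs(speed_gbps, bus_width_bits):
    """Return peak theoretical memory bandwidth in gigabytes per second."""
    return speed_gbps * bus_width_bits / 8


for name, (speed, width) in CARDS.items():
    print(f"{name}: {memory_bandwidth_gbs(speed, width):.0f}GB/s")
```

This is why the RTX 2060 trails the rest despite having the same 14 Gbps memory: its narrower bus is what holds its bandwidth back, not the speed of the memory chips themselves.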

The only card we're really still waiting on at the moment is the inevitable RTX 2050 - although according to recent rumours, Nvidia may well be ditching their RTX moniker and headline ray tracing support for their next-gen budget card and reverting to their old GTX naming convention with the so-called (not to mention highly confusing) GTX 1660. Of course, I'll update this with more accurate information as and when it's officially announced by Nvidia. For now, though, let's focus on the Nvidia RTX cards we do know about.

In terms of display outputs, each card will also support 8K resolutions at 60Hz with HDR enabled - on a single screen, no less, thanks to their DisplayPort 1.4a ready ports - as well as 4K at 60Hz via their HDMI 2.0b outputs. They're also ready to support the fancy new USB-C tech, VirtualLink. This will be of particular importance for VR users, as it means the card's USB-C output will be able to deliver all the power, display signal and data you need to use a VR headset from a single connector.

Nvidia's also hoping their Turing cards will be an appealing prospect for streamers, as they'll be able to encode 8K video at 30fps in HDR in real time for the first time, as well as get up to 25% bitrate savings when encoding with HEVC, and up to 15% savings in H.264. 4K streaming will also become a lot more practical, with Nvidia claiming you'll get better performance than the typical x264 encoding setting you might be using today. According to Nvidia's figures, streaming to Twitch at 4K using a 40K bitrate results in just 1% dropped frames and 1% CPU utilization, compared to 90% dropped frames and 73% CPU utilization via x264.

Nvidia RTX performance

Specs are all well and good, but the key question on everyone's lips is all to do with speed, speed, speed. How much faster are they than Nvidia's GTX graphics cards, and are they worth spending all that money on? In some cases, absolutely. You'll find more detailed performance figures in each RTX graphics card review, but the RTX 2060, for example, is an astonishingly good graphics card that's great value for money, and is easily the equal of Nvidia's current GTX 1070Ti, offering near 60fps perfection at 2560x1440 resolutions as well as absolutely flawless 60fps+ at 1920x1080 on maximum quality settings.

The RTX 2070, on the other hand, is a smidge faster than the GTX 1080, making it another great 1440p card as well as a decent 4K option, while the RTX 2080 isn't really that much better than the GTX 1080Ti - at least when it comes to raw performance, that is, and you disregard all of its fancy RTX features.

The RTX 2080Ti, meanwhile, is substantially faster than any other graphics card I've tested so far, and by far the mightiest card for 4K gaming at 60fps that's currently available.

I'll be doing more comparison pieces over the coming weeks, but for now, here's how a lot of them stack up against their GTX competition.

Nvidia RTX: What the hell is ray tracing and why should I care?

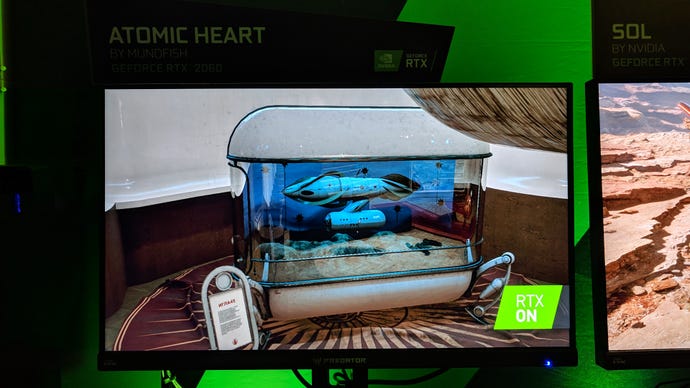

As I mentioned above, ray tracing is a really, really fancy light tech that makes shadows and reflections behave just how they do in real life. It's not proper ray tracing like you get in Pixar films, for example (that's still way beyond the capabilities of modern GPUs), but Nvidia's AI boffins have pretty much given us the next best thing - as my interview with Metro Exodus rendering programmer Ben Archard can attest. Have a look at Nvidia's Sol demo to see what I mean:

See how the light reflects off every single surface just like it should in real life, and how each reflection is capable of rendering objects that aren't even in that particular frame? That's what ray tracing can do, and it's only really when you compare games side by side that you realise how wrong they looked before when we didn't know any better.
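For the curious, the basic idea behind a ray traced reflection can be sketched in a few lines of Python. This is very much a toy illustration under my own assumptions (a single sphere, a perfect mirror bounce, and made-up function names), not how Nvidia's RT Cores or any actual game engine implement it: you fire a ray from the camera, work out where it hits something, then bounce it off the surface to see what it would reflect.

```python
import math


def dot(a, b):
    """Dot product of two 3D vectors given as tuples."""
    return sum(x * y for x, y in zip(a, b))


def hit_sphere(origin, direction, centre, radius):
    """Distance along a unit-length ray to the nearest point where it enters
    the sphere, or None if the ray misses (or the sphere is behind it)."""
    oc = tuple(o - c for o, c in zip(origin, centre))
    b = 2.0 * dot(oc, direction)
    c = dot(oc, oc) - radius * radius
    discriminant = b * b - 4.0 * c
    if discriminant < 0:
        return None
    t = (-b - math.sqrt(discriminant)) / 2.0
    return t if t > 0 else None


def reflect(direction, normal):
    """Mirror a ray direction about a unit-length surface normal."""
    d_dot_n = dot(direction, normal)
    return tuple(d - 2.0 * d_dot_n * n for d, n in zip(direction, normal))


# Camera at the origin looking straight down the -z axis at a mirrored ball.
camera = (0.0, 0.0, 0.0)
view = (0.0, 0.0, -1.0)
ball_centre, ball_radius = (0.0, 0.0, -5.0), 1.0

distance = hit_sphere(camera, view, ball_centre, ball_radius)
hit_point = tuple(o + distance * d for o, d in zip(camera, view))
normal = tuple((p - c) / ball_radius for p, c in zip(hit_point, ball_centre))
bounced = reflect(view, normal)
print(distance, hit_point, bounced)
```

In this set-up, the bounced ray heads straight back towards (and past) the camera, which is exactly why a ray traced reflection can show objects that aren't anywhere in the frame you're looking at. A real game has to do this for huge numbers of rays every frame, which is where the performance cost comes from.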

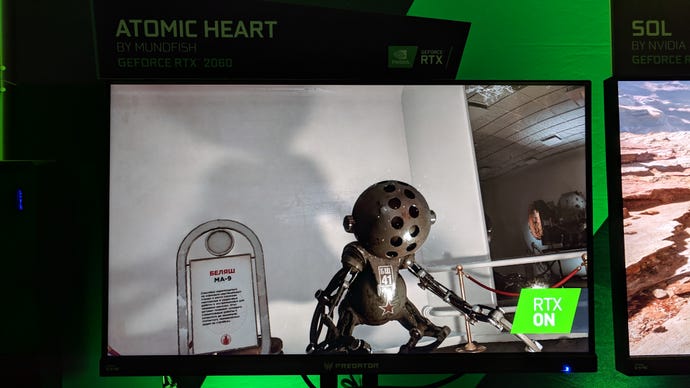

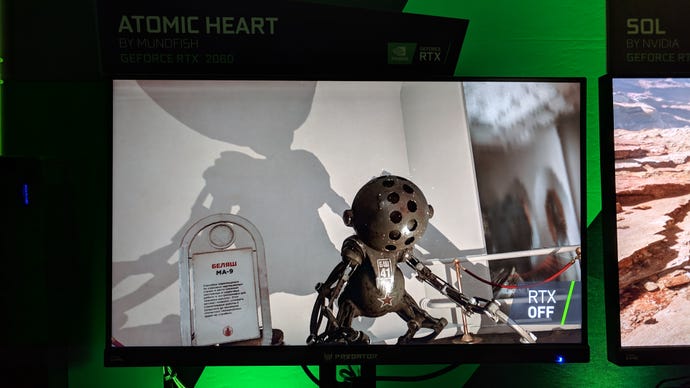

Ray tracing is also essential in creating all those soft shadow edges that simply aren't possible with regular lighting techniques. Have a look at these stills from Mundfish's upcoming Atomic Heart. You'll have to excuse the quality of my phone camera (dark demo rooms really aren't very conducive to good picture-taking), but the difference is like night and day.

At the moment, there are just a handful of confirmed RTX games that will be supporting Nvidia's ray tracing tech, but the demos I've seen so far have all been rather tasty-looking, from Shadow of the Tomb Raider's lively Day of the Dead scene to Battlefield V's exploding tank fire in the eyes of your enemies.

The bad news, yet again, is that ray tracing still takes a massive toll on the old performance levels. While I've yet to put Battlefield V through its paces, the Metro Exodus team said they were aiming for 60fps at just 1080p with all of its ray tracing tech turned on when I spoke to them back in August, suggesting you'll need one of the higher-end Nvidia RTX cards to really make the most of it.

Fortunately, Nvidia are working hard to ensure that Turing's other key feature can help pick up the slack. Enter DLSS.

Nvidia RTX DLSS: Turing's secret weapon

Fortunately, Nvidia's crammed a lot of other performance-boosting bits of AI tech inside their RTX cards that will hopefully negate any potential ray tracing impact if developers take advantage of them. Indeed, for all the attention given to ray tracing during Nvidia's RTX launch, it isn't nearly as interesting as Turing's other key feature, DLSS.

Also known as Deep Learning Super Sampling, this bit of tech is pretty damn neat. In essence, it's a super efficient form of anti-aliasing (AA) and uses Turing's AI and deep-learning-stuffed Tensor Cores to help take some of the strain off the GPU. AA, in case you're unfamiliar with the term, is a technique game makers use to make lines and edges appear smooth and natural instead of a million jagged pixels.

It's particularly important when playing games at 4K, as more common AA techniques, such as temporal anti-aliasing (TAA), can often soften images a bit too much (negating that lovely sharp feeling 4K should bring) as well as get things a bit wrong when scenes are happening at speed. Whether you'll notice any of this without the aid of a freeze-frame camera device, of course, is up for debate.

The key thing is that it can really help pump up those frame rate figures without much of a dip in image quality. It's only available in Final Fantasy XV at the moment, but here I found I was able to get around another 10-15fps out of it with DLSS turned on compared to the game's default AA options.

Much like ray tracing, however, DLSS is a separate feature that not only needs to have support added by individual developers, but a hefty bit of input from Nvidia themselves as well, as they're the ones that need to train their clever AI neural network boffin machines on each game in the first place to make sure they're predicting (and therefore generating) the correct textures and images. Only once this process has been completed can DLSS support be enabled by developers.

Still, from what I've seen of DLSS so far, it's an incredibly impressive bit of tech that, if there's enough uptake, should give Nvidia's RTX cards a massive advantage over the upcoming Big Navi GPUs - especially if it can make up for the performance dip brought on by all that ray tracing malarkey.

Nvidia RTX: Variable rate shading

Last but not least, variable rate shading is another clever bit of tech in Nvidia's RTX cards that can provide another performance boost to help keep those frame rates nice and high. It does this by concentrating the detail of a scene where it's needed most (in the centre, say, where you're focusing most of your attention), and reducing the level of detail elsewhere, such as things on the periphery or simpler bits of geometry in the foreground.

To give an example, imagine a scene from Forza Horizon 4. The car's in the middle, you've got that wonderful British countryside stretching out in front of you, fields whizzing by to your right and left, and the rest of the road trailing behind you.

Naturally, the car and horizon are the main focus of your attention, so these bits will be given the most detail. The fields to the side are less important, so they might have half as much shading given to them. The road, meanwhile, is definitely not where your attention should be focused in this high-octane racer, so that might receive half as much detail again. The end result is a scene that not only looks nice and sharp where it counts, but delivers a higher frame rate than if everything was being rendered with the same amount of detail.
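To put some rough numbers on that Forza example, here's a small Python sketch of the sums involved. The shading rates (full, half, and half again) come from the example above, but the share of the screen each region takes up is entirely made up by me for illustration, as are the names in the code.

```python
# Each region: (share of the screen, shading rate relative to full detail).
# Assumption: the screen shares are invented for illustration only; the
# rates follow the full / half / half-again example in the text.
REGIONS = {
    "car and horizon": (0.40, 1.0),
    "fields": (0.40, 0.5),
    "road": (0.20, 0.25),
}


def relative_shading_work(regions):
    """Shading work as a fraction of shading the whole screen at full rate."""
    return sum(share * rate for share, rate in regions.values())


work = relative_shading_work(REGIONS)
print(f"Shading work: {work:.0%} of full detail")
print(f"Shading work saved: {1 - work:.0%}")
```

With these invented proportions, the GPU ends up doing roughly 65% of the shading work it would do at full detail everywhere. Bear in mind that shading is only one part of rendering a frame, so a saving like this won't translate directly into the same percentage increase in frame rate.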

If you're worried that this might make games look a bit inconsistent, fear not. Another example Nvidia showed me at Gamescom was the submarine area from Wolfenstein II: The New Colossus. Here, variable rate shading was being used to intelligently assess which bits of the scene to shade properly without a loss of overall quality.

Technically called 'content adaptive shading', this was arguably one of the most impressive demos I saw during Nvidia's entire presentation, and the difference between having it on and off was almost imperceptible. There was a vague sort of rippling effect present when I was standing stock still surveying the centre of the main control room, but it completely disappeared the moment I started to move. What's more, the frame rate leapt by 15-20fps when I had it turned on, which is pretty impressive for nigh-on identical image quality.

Nvidia RTX conclusion: Is it worth it?

There's no denying that Nvidia's RTX tech is impressive stuff. Every demo I've seen confirms it, as does what I've played and tested in actual games as well. The problem is that there are still far too few games that actually support ray tracing and / or DLSS to make it worth upgrading to one of Nvidia's RTX cards on those grounds alone. At time of writing, Battlefield V and Final Fantasy XV are the only games that have actually had their RTX support added in so far, and there's currently no word on when it will arrive for other already-released games such as Shadow of the Tomb Raider, or whether it will be ready in time for upcoming blockbusters such as Metro Exodus.

Then again, I think it's also fair to say that ray tracing and DLSS don't seem to be just passing fads for this particular generation of graphics cards, and I'd be very surprised indeed if support for Nvidia's RTX tech didn't continue to grow and become more widespread as the months and years roll on.

Until that happens, though, the only real reason to upgrade to an Nvidia RTX card right now is the basic performance boost they offer over their GTX predecessors, which is more compelling on some cards than it is on others. The RTX 2060 is definitely one of the better value RTX cards at the moment, but those looking for something even cheaper would do well to hold on a little bit longer until we see what's happening with this so-called GTX 1660, which still has a Turing-based GPU, according to the latest gossip from the rumour mill, but no ray tracing support.